In DataWeave 2.0 functions are categorized into different modules.

- Core (dw::Core)

- Arrays (dw::core::Arrays)

- Binaries (dw::core::Binaries)

- Encryption (dw::Crypto)

- Diff (dw::util::Diff)

- Objects (dw::core::Objects)

- Runtime (dw::Runtime)

- Strings (dw::core::Strings)

- System (dw::System)

- URL (dw::core::URL)

Functions defined in Core (dw::Core) module are imported automatically into your DataWeave scripts. To use other modules, we need to import them by adding the import directive to the head of DataWeave script, for example:

import dw::core::Strings

import dasherize, underscore from dw::core::Strings

import * from dw::core::Strings

{

"firstName" : "Murali",

"lastName" : "Krishna",

"age" : "26",

“age” : ”26”

}

1. Core (dw::Core)

Below are the DataWeave 2 core functions:

++ , –, abs, avg, ceil, contains, daysBetween, distinctBy, endsWith, filter, IsBlank, joinBy, min, max etc….

result : [0, 1, 2] ++ [“a”, “b”, “c”] will gives us “result” : “[0, 1, 2, “a”, “b”, “c”]”

result : [0, 1, 1, 2] — [1,2] will gives us “result” : “[0]”

result : abs(-20) will gives us “result” : 20

average : avg([1, 1000]) will gives us “average” : 500.5

value : ceil(1.5) will gives us “value” : 2

result : payload contains “Krish” will gives us “result” : true

days: daysBetween(“2016-10-01T23:57:59-03:00”, “2017-10-01T23:57:59-03:00”) will gives us “days”: 365

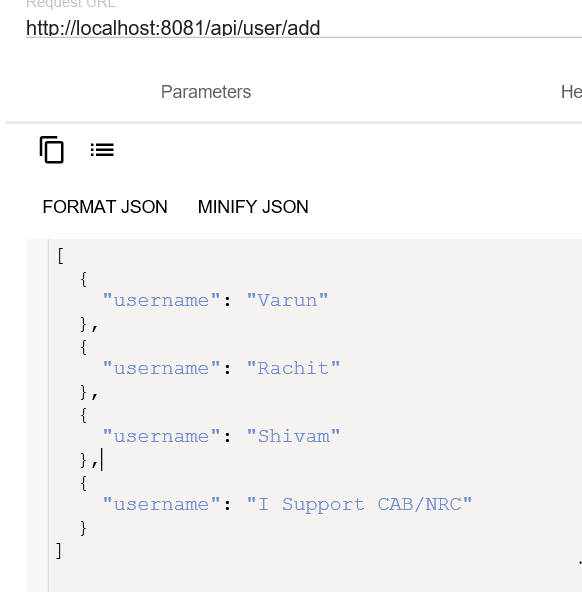

age : payload distinctBy $ will gives us :

{

“firstName” : “Murali”,

“lastName” : “Krishna”,

“age” : ”26”

}

a: “Murali” endsWith “li” will gives us “a” : true

a: [1, 2, 3, 4, 5] filter($ > 2) will gives us “a” : [3,4,5]

empty: isBlank(“”) will gives us “empty” : true

aa: [“a”,”b”,”c”] joinBy “-” will gives us “a” : “a-b-c”

a: min([1, 1000]) will gives us “a” : 1

a: max([1, 1000]) will gives us “a” : 1000

2.Arrays (dw::core::Arrays)

Arrays related functions in DataWeave are :

countBy, divideBy, every, some, sumBy

[1, 2, 3] countBy (($ mod 2) == 0) will gives us 1

[1,2,3,4,5] dw::core::Arrays::divideBy 2 will gives us :

[

[

1,

2

],

[

3,

4

],

[

5

]

]

[1,2,3,4] dw::core::Arrays::every ($ == 1) will gives us “false”

[1,2,3,4] dw::core::Arrays::some ($ == 1) will gives us “true”

[ { a: 1 }, { a: 2 }, { a: 3 } ] sumBy $.a will gives us “6”

3.Binaries (dw::core::Binaries)

Binary functions in DataWeave-2 are:

fromBase64, fromHex, toBase64, toHex

toBase64(fromBase64(12463730)) will gives us “12463730”

{ “binary”: fromHex(‘4D756C65’)} will gives us “binary” : “Mule”

{ “hex” : toHex(‘Mule’) } will gives us “hex” : “4D756C65”

4.Encryption (dw::Crypto)

Encryption functions in Dataweave – 2 are:

HMACBinary, HMACWith, MD5, SHA1, hashWith

{ “HMAC”: Crypto::HMACBinary((“aa” as Binary), (“aa” as Binary)) } will gives us :

“HMAC”: “\u0007£š±]\u00adÛ\u0006‰\u0006Ôsv:ý\u000b\u0016çÜð”

Crypto::MD5(“asd” as Binary) will gives us “7815696ecbf1c96e6894b779456d330e”

Crypto::SHA1(“dsasd” as Binary) will gives us “2fa183839c954e6366c206367c9be5864e4f4a65”

5.Diff (dw::util::Diff)

It calculates difference between two values and returns list of differences.

DataWeave Script:

%dw 2.0

import * from dw::util::Diff

output application/json

var a = { age: “Test” }

var b = { age: “Test2” }

—

a diff b

Output:

{

“matches”: false,

“diffs”: [

{

“expected”: “\”Test\””,

“actual”: “\”Test2\””,

“path”: “(root).age”

}

]

}

Note:

Rest of the things will proceed in Mule – 4 DataWeave Functions Part – 2 article